#include <fused_tensor.h>

Public Types

| using | value_type = T |

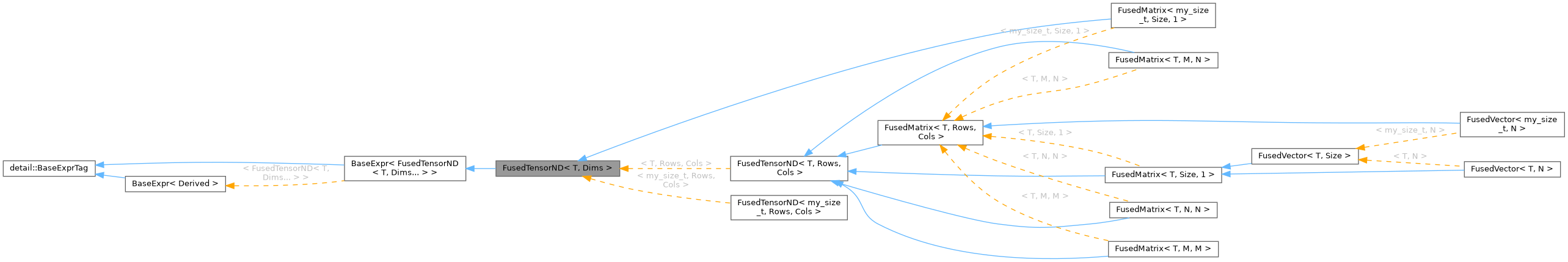

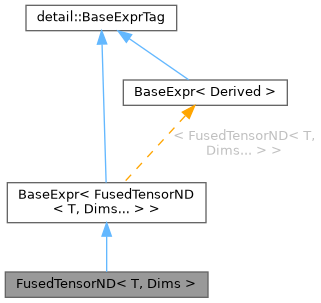

| using | Self = FusedTensorND< T, Dims... > |

| using | Layout = StridedLayoutConstExpr< typename AccessPolicy::PadPolicy > |

Public Member Functions

| FusedTensorND () noexcept=default | |

| FusedTensorND (T initValue) noexcept | |

| FusedTensorND (const FusedTensorND &other) noexcept | |

| FusedTensorND (FusedTensorND &&other) noexcept | |

| template<typename Output > | |

| bool | may_alias (const Output &output) const noexcept |

| template<typename Expr > | |

| FusedTensorND & | operator= (const BaseExpr< Expr > &expr) |

| template<typename T_ , my_size_t Bits, typename Arch > | |

| Microkernel< T_, Bits, Arch >::VecType | evalu (my_size_t flat) const noexcept |

| template<typename T_ , my_size_t Bits, typename Arch > | |

| FORCE_INLINE Microkernel< T_, Bits, Arch >::VecType | logical_evalu (my_size_t logical_flat) const noexcept |

| Evaluate at a LOGICAL flat index. | |

| FusedTensorND & | operator= (const FusedTensorND &other) noexcept |

| FusedTensorND & | operator= (FusedTensorND &&other) noexcept |

| template<typename... Indices> requires (sizeof...(Indices) == NumDims) | |

| T & | operator() (Indices... indices) TESSERACT_CONDITIONAL_NOEXCEPT |

| template<typename... Indices> requires (sizeof...(Indices) == NumDims) | |

| const T & | operator() (Indices... indices) const TESSERACT_CONDITIONAL_NOEXCEPT |

| T & | operator() (const my_size_t *indices) TESSERACT_CONDITIONAL_NOEXCEPT |

| const T & | operator() (const my_size_t *indices) const TESSERACT_CONDITIONAL_NOEXCEPT |

| T & | operator() (my_size_t(&indices)[NumDims]) TESSERACT_CONDITIONAL_NOEXCEPT |

| const T & | operator() (my_size_t(&indices)[NumDims]) const TESSERACT_CONDITIONAL_NOEXCEPT |

| bool | isIdentity () const |

| template<my_size_t... Perm> | |

| FORCE_INLINE auto | transpose_view () const noexcept |

| FORCE_INLINE auto | transpose_view (void) const noexcept |

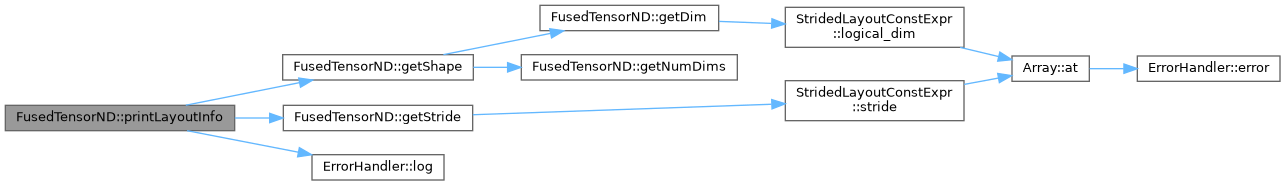

| std::string | getShape () const |

| FusedTensorND & | setToZero (void) noexcept |

| FusedTensorND & | setHomogen (T _val) noexcept |

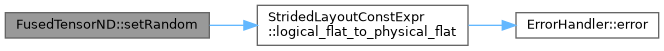

| FusedTensorND & | setRandom (T _maxRand, T _minRand) |

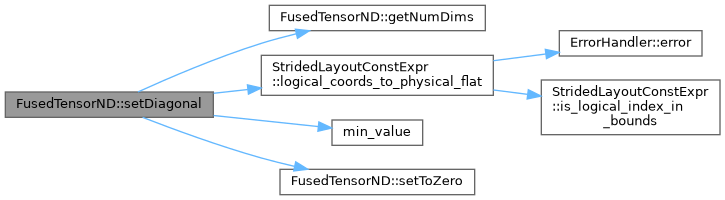

| FusedTensorND & | setDiagonal (T _val) |

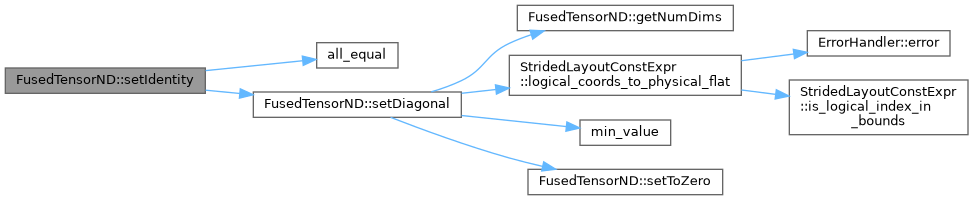

| FusedTensorND & | setIdentity (void) |

| FusedTensorND & | setSequencial (void) |

| template<my_size_t DiagonalSize> | |

| void | getDiagonalEntries (FusedTensorND< T, DiagonalSize, 1 > &diagonalEntries) const |

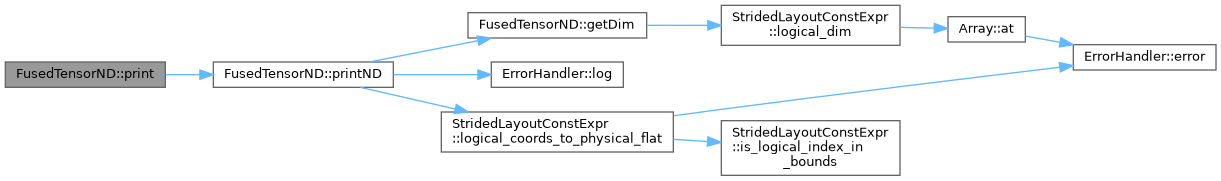

| void | print (bool with_padding=false) const |

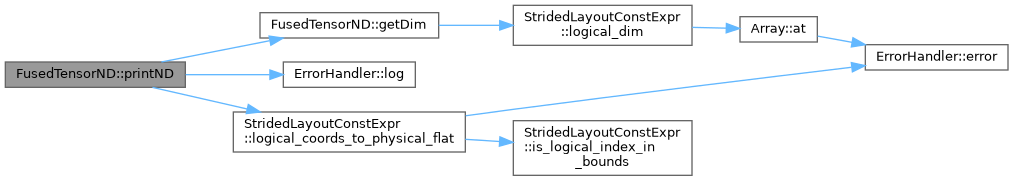

| void | printND (bool showPadding=false) const |

| Print tensor of arbitrary dimensions. | |

| void | printLayoutInfo () const |

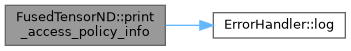

| void | print_access_policy_info () const |

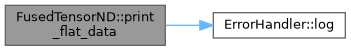

| void | print_flat_data () const |

| FORCE_INLINE constexpr const T * | data () const noexcept |

| FORCE_INLINE constexpr T * | data () noexcept |

Public Member Functions inherited from BaseExpr< FusedTensorND< T, Dims... > >

| const FusedTensorND< T, Dims... > & | derived () const |

Static Public Member Functions

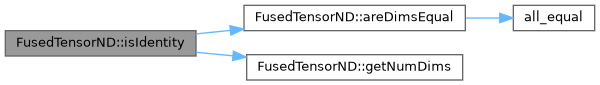

| static constexpr bool | areDimsEqual () |

| static FORCE_INLINE constexpr my_size_t | getTotalSize () noexcept |

| static FORCE_INLINE constexpr my_size_t | getNumDims () noexcept |

| template<typename LeftExpr , typename RightExpr > requires (expression::traits<LeftExpr>::IsPhysical && expression::traits<RightExpr>::IsPhysical) | |

| static FusedTensorND | einsum (const BaseExpr< LeftExpr > &_tensor1, const BaseExpr< RightExpr > &_tensor2, const my_size_t a, const my_size_t b) |

| Contract two tensors along specified axes using SIMD dot products. | |

| static FORCE_INLINE constexpr my_size_t | getDim (my_size_t i) TESSERACT_CONDITIONAL_NOEXCEPT |

| static FORCE_INLINE constexpr my_size_t | getStride (my_size_t i) TESSERACT_CONDITIONAL_NOEXCEPT |

Static Public Attributes

| static constexpr my_size_t | NumDims = sizeof...(Dims) |

| static constexpr my_size_t | Dim [] = {Dims...} |

| static constexpr my_size_t | TotalSize = (Dims * ...) |

Friends

| template<typename , my_size_t... > | |

| class | PermutedViewConstExpr |

Member Typedef Documentation

◆ Layout

| using FusedTensorND< T, Dims >::Layout = StridedLayoutConstExpr<typename AccessPolicy::PadPolicy> |

◆ Self

| using FusedTensorND< T, Dims >::Self = FusedTensorND<T, Dims...> |

◆ value_type

| using FusedTensorND< T, Dims >::value_type = T |

Constructor & Destructor Documentation

◆ FusedTensorND() [1/4]

| default noexcept |

◆ FusedTensorND() [2/4]

| inline explicit noexcept |

◆ FusedTensorND() [3/4]

| inline noexcept |

◆ FusedTensorND() [4/4]

| inline noexcept |

Member Function Documentation

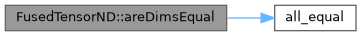

◆ areDimsEqual()

| inline static constexpr |

◆ data() [1/2]

| inline constexpr noexcept |

◆ data() [2/2]

| inline constexpr noexcept |

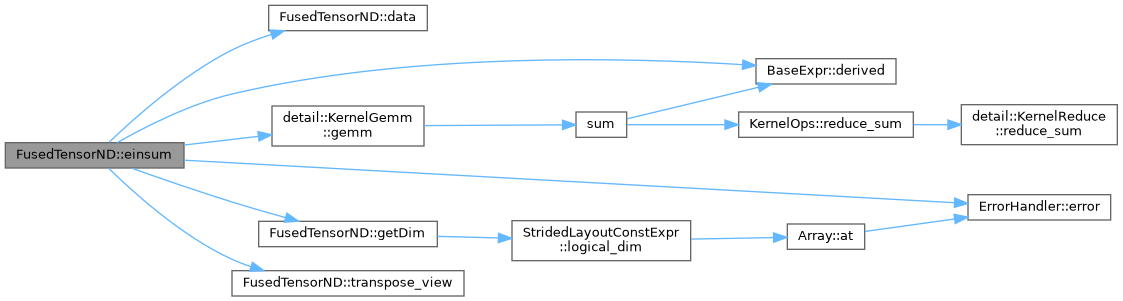

◆ einsum()

requires (expression::traits<LeftExpr>::IsPhysical && expression::traits<RightExpr>::IsPhysical)

| inline static |

Contract two tensors along specified axes using SIMD dot products.

For 2D tensors, einsum always dispatches to a register-blocked GEMM, materializing transposed copies when needed. The O(N²) transpose cost is negligible next to the O(N³) multiply, and the materialized tensor carries proper SIMD-aligned padding, so the micro-kernel can use aligned K::load.

For higher-dimensional tensors, falls back to generic stride-mapped per-element dot products.

2D GEMM: four cases (contract axis a of tensor1 with axis b of tensor2)

- a=1, b=0: C[M,N] = A[M,K] × B[K,N] (favorable, no transpose)
- a=0, b=0: C[K,N] = A^T[K,M] × B[M,N] (transpose A)
- a=1, b=1: C[M,K] = A[M,N] × B^T[N,K] (transpose B)
- a=0, b=1: C[K,K'] = A^T[K,M] × B^T[M,K'] (transpose both)
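To make the four cases concrete, here is a naive scalar reference for the same contraction rule. It is a sketch, not the library's register-blocked GEMM: `Mat` and `contract2d` are hypothetical names, matrices are plain unpadded row-major `std::vector<double>` buffers, and the transposed operand is read through swapped indices instead of being materialized.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical row-major matrix, used only for this sketch (no padding).
struct Mat {
    std::size_t rows, cols;
    std::vector<double> v;
    double at(std::size_t r, std::size_t c) const { return v[r * cols + c]; }
};

// Contract axis a of A with axis b of B (a, b in {0, 1}).
// The free axis of A becomes the rows of C, the free axis of B its columns.
Mat contract2d(const Mat& A, const Mat& B, std::size_t a, std::size_t b) {
    const std::size_t M = (a == 1) ? A.rows : A.cols;  // free extent of A
    const std::size_t K = (a == 1) ? A.cols : A.rows;  // contracted extent
    const std::size_t N = (b == 0) ? B.cols : B.rows;  // free extent of B
    assert(K == ((b == 0) ? B.rows : B.cols));         // contracted extents must match
    Mat C{M, N, std::vector<double>(M * N, 0.0)};
    for (std::size_t i = 0; i < M; ++i)
        for (std::size_t j = 0; j < N; ++j) {
            double acc = 0.0;
            for (std::size_t k = 0; k < K; ++k) {
                // a == 0 reads A transposed; b == 1 reads B transposed.
                const double x = (a == 1) ? A.at(i, k) : A.at(k, i);
                const double y = (b == 0) ? B.at(k, j) : B.at(j, k);
                acc += x * y;
            }
            C.v[i * N + j] = acc;
        }
    return C;
}
```

A reference like this is mainly useful for checking a blocked kernel's output on small inputs, e.g. `contract2d(A, B, 1, 0)` for the favorable case.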

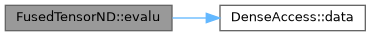

◆ evalu()

| inline noexcept |

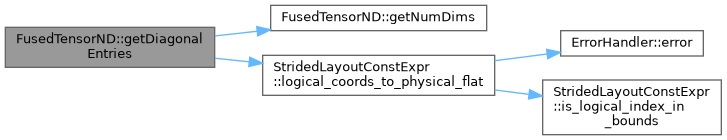

◆ getDiagonalEntries()

| inline |

◆ getDim()

| inline static constexpr |

◆ getNumDims()

| inline static constexpr noexcept |

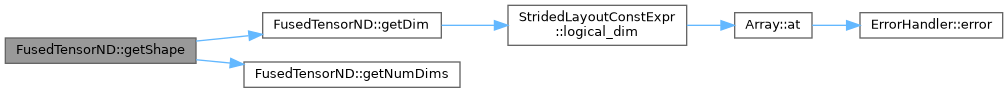

◆ getShape()

| inline |

◆ getStride()

| inline static constexpr |

◆ getTotalSize()

| inline static constexpr noexcept |

◆ isIdentity()

| inline |

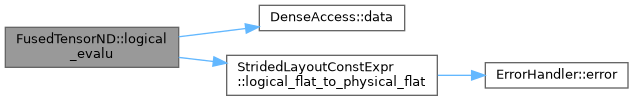

◆ logical_evalu()

| inline noexcept |

Evaluate at a LOGICAL flat index.

Unlike evalu, which takes physical offsets, this converts a logical flat index to a physical flat index via Layout, accounting for padding gaps. For SIMD widths greater than 1 it uses a gather, because consecutive logical indices are not contiguous in physical memory when padding exists.
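The logical-to-physical mapping can be sketched as follows. Purely for illustration, this assumes that only the last dimension is padded, up to a multiple of the SIMD width; the library's PadPolicy may lay out padding differently. `PaddedLayoutSketch` and `gather_logical` are hypothetical names, and the gather is emulated with a scalar loop rather than a hardware gather instruction.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// ASSUMPTION for this sketch: only the last dimension is padded, rounded up
// to a multiple of SimdWidth. The library's PadPolicy may differ.
template <std::size_t SimdWidth, std::size_t... Dims>
struct PaddedLayoutSketch {
    static constexpr std::size_t N = sizeof...(Dims);
    static constexpr std::array<std::size_t, N> dims{Dims...};
    static constexpr std::size_t last = dims[N - 1];
    static constexpr std::size_t padded_last =
        (last + SimdWidth - 1) / SimdWidth * SimdWidth;

    // Logical flat index (row-major over Dims) -> physical flat offset.
    // Each outer "row" occupies padded_last slots, so the padding gap after
    // every run of `last` logical elements is skipped.
    static constexpr std::size_t logical_to_physical(std::size_t logical) {
        return (logical / last) * padded_last + logical % last;
    }
};

// Scalar emulation of a W-wide gather starting at a logical flat index.
// The caller must keep logical_flat + W - 1 within the logical size.
template <std::size_t W, typename Layout>
std::array<double, W> gather_logical(const double* data, std::size_t logical_flat) {
    std::array<double, W> lanes{};
    for (std::size_t i = 0; i < W; ++i)
        lanes[i] = data[Layout::logical_to_physical(logical_flat + i)];
    return lanes;
}
```

With dims 2×3 and a width of 4, logical indices 2 and 3 map to physical offsets 2 and 4, which is exactly why a contiguous vector load would pick up a padding slot instead.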

◆ may_alias()

| inline noexcept |

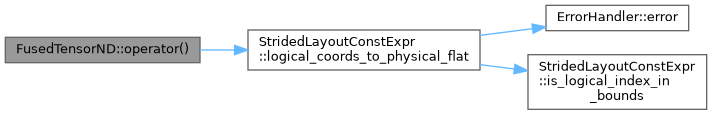

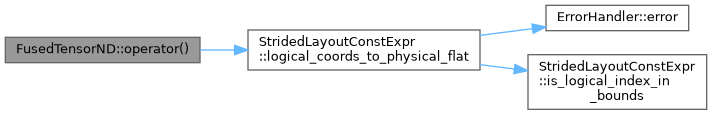

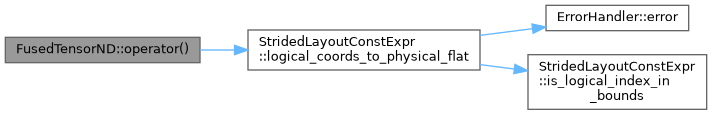

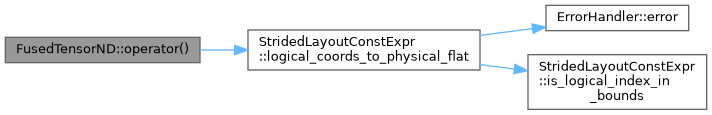

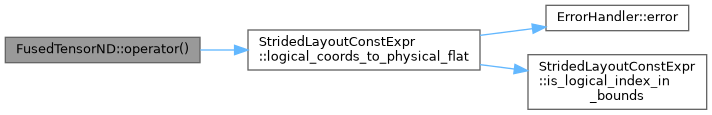

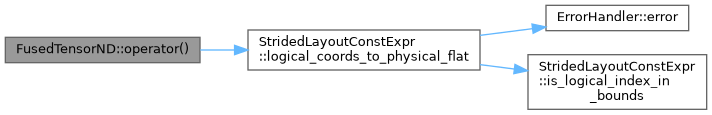

◆ operator()() [1/6]

| inline |

◆ operator()() [2/6]

| inline |

◆ operator()() [3/6]

requires (sizeof...(Indices) == NumDims)

| inline |

◆ operator()() [4/6]

requires (sizeof...(Indices) == NumDims)

| inline |

◆ operator()() [5/6]

| inline |

◆ operator()() [6/6]

| inline |

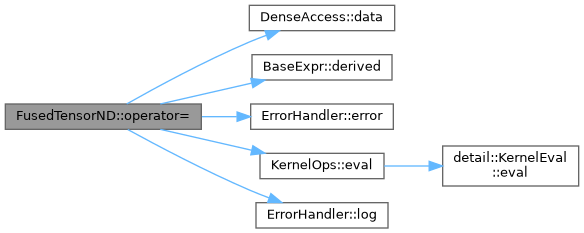

◆ operator=() [1/3]

| inline |

◆ operator=() [2/3]

| inline noexcept |

◆ operator=() [3/3]

| inline noexcept |

◆ print()

| inline |

◆ print_access_policy_info()

| inline |

◆ print_flat_data()

| inline |

◆ printLayoutInfo()

| inline |

◆ printND()

| inline |

Print tensor of arbitrary dimensions.

Convention: the last two dimensions are (rows, cols) and earlier dimensions are slice indices. A 1D tensor prints as a single row, a 2D tensor as a matrix, and a tensor with three or more dimensions as labeled 2D slices.

- Parameters
  - showPadding: If true, show padding elements after a '|' separator.
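The slicing convention can be illustrated with a hypothetical `format_slices` helper for a row-major 3D array of shape [S][R][C]. The slice label format is invented for this sketch and is not printND's actual output; padding is ignored.

```cpp
#include <cassert>
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper: the leading index selects a slice, and the last two
// dimensions are printed as rows and columns of that slice.
std::string format_slices(const std::vector<int>& v,
                          std::size_t S, std::size_t R, std::size_t C) {
    std::ostringstream os;
    for (std::size_t s = 0; s < S; ++s) {
        os << "[" << s << ", :, :]\n";  // slice label (illustrative format)
        for (std::size_t r = 0; r < R; ++r) {
            for (std::size_t c = 0; c < C; ++c)
                os << (c ? " " : "") << v[(s * R + r) * C + c];
            os << "\n";
        }
    }
    return os.str();
}
```

For a 2×2×2 array holding 0 through 7, this yields two labeled 2×2 blocks, `0 1 / 2 3` and `4 5 / 6 7`.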

◆ setDiagonal()

| inline |

◆ setHomogen()

| inline noexcept |

It is safe to fill the entire physical buffer (padding included) with the same value.

◆ setIdentity()

| inline |

◆ setRandom()

| inline |

◆ setSequencial()

| inline |

◆ setToZero()

| inline noexcept |

◆ transpose_view() [1/2]

| inline noexcept |

◆ transpose_view() [2/2]

| inline noexcept |

Friends And Related Symbol Documentation

◆ PermutedViewConstExpr

| friend |

Member Data Documentation

◆ Dim

| static constexpr |

◆ NumDims

| static constexpr |

◆ TotalSize

| static constexpr |
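The three static attributes follow directly from the template parameter pack, as their definitions in the summary show. The hypothetical `ShapeSketch` below reproduces them and adds an unpadded row-major stride for comparison. This stride is an assumption: the real getStride() goes through the padded Layout, so its values may differ.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical ShapeSketch mirroring the documented definitions:
// NumDims = sizeof...(Dims), Dim[] = {Dims...}, TotalSize = (Dims * ...).
template <std::size_t... Dims>
struct ShapeSketch {
    static constexpr std::size_t NumDims = sizeof...(Dims);
    static constexpr std::size_t Dim[] = {Dims...};
    static constexpr std::size_t TotalSize = (Dims * ...);  // unary right fold

    // Row-major stride of dimension i for an UNPADDED layout (assumption):
    // the product of all dimensions after i.
    static constexpr std::size_t stride(std::size_t i) {
        std::size_t s = 1;
        for (std::size_t j = i + 1; j < NumDims; ++j) s *= Dim[j];
        return s;
    }
};

static_assert(ShapeSketch<2, 3, 4>::NumDims == 3);
static_assert(ShapeSketch<2, 3, 4>::Dim[1] == 3);
static_assert(ShapeSketch<2, 3, 4>::TotalSize == 24);
static_assert(ShapeSketch<2, 3, 4>::stride(0) == 12);
```

Because every value is a constant expression, shape mismatches can be caught with `static_assert` before any data is touched.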

The documentation for this class was generated from the following files:

- core/include/algebra/fused_tensor_algebraic_traits.h

- core/include/fused/fused_tensor.h