Reduction operations — min, max, sum over expression elements. More...

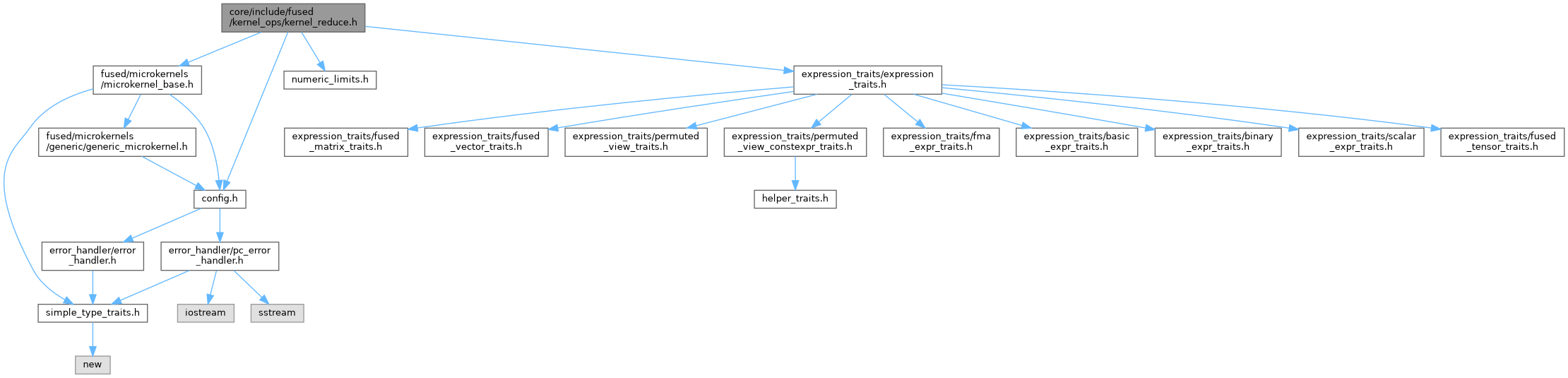

#include "config.h"#include "fused/microkernels/microkernel_base.h"#include "numeric_limits.h"#include "expression_traits/expression_traits.h"

Go to the source code of this file.

Classes | |

| struct | detail::KernelReduce< T, Bits, Arch > |

Namespaces | |

| namespace | detail |

Enumerations | |

| enum class | detail::ReduceOp { detail::Min , detail::Max , detail::Sum } |

Detailed Description

Reduction operations — min, max, sum over expression elements.

Parameterized on ReduceOp enum. Dispatches based on expression layout:

- Contiguous: physical slice iteration, SIMD + scalar tail

- Logical: flat logical index iteration, scalar only (for permuted views)

STRATEGY (contiguous path)

Physical memory is organized as numSlices × paddedLastDim, where only the first lastDim elements per slice are logical data:

slice 0: [d d d d d P P P] slice 1: [d d d d d P P P] d = data, P = padding slice 2: [d d d d d P P P] |← lastDim→| |← paddedLastDim →|

For a 3D tensor [2, 3, 5], numSlices = 2*3 = 6: slice 0 = [0,0,:] slice 3 = [1,0,:] slice 1 = [0,1,:] slice 4 = [1,1,:] slice 2 = [0,2,:] slice 5 = [1,2,:]

Per slice, SIMD processes simdWidth-aligned chunks, then a scalar tail handles the remainder. Padding is never read.

GENERICARCH (SimdWidth=1): no padding, simdSteps=lastDim, no scalar tail.